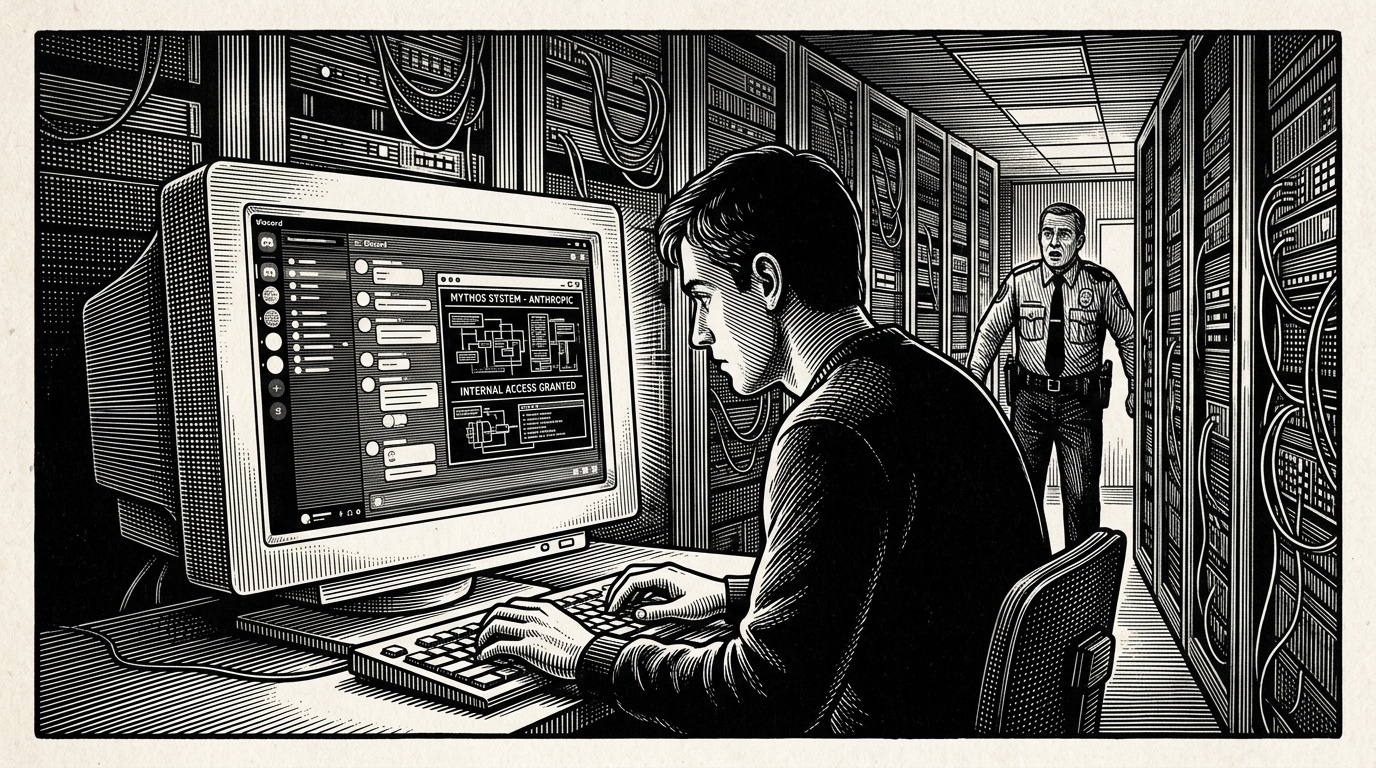

Unauthorised users gained access through Discord to Mythos, an internal Anthropic system, according to reporting by Wired. At the same time, cybersecurity chiefs from companies with legitimate access to the tool warned that its broader rollout will require close coordination between governments and private industry to remain secure.

A group of Discord-based investigators gained unauthorised access to Mythos, an internal tool developed by Anthropic, the AI safety company behind the Claude family of large language models, Wired reported on Friday. The breach, uncovered by journalists Matt Burgess, Lily Hay Newman, and Andy Greenberg, represents one of the more significant known intrusions into a major AI laboratory's internal systems in recent memory.

Anthropic has not publicly detailed what Mythos contains or what data may have been exposed, but the incident has drawn immediate concern from the broader AI and cybersecurity community. The timing is notable: on the same day the breach was reported, the Financial Times reported that cybersecurity executives from companies with authorised Mythos access issued a collective warning urging joint defence of the infrastructure surrounding the tool.

Those security chiefs cautioned that Mythos's rollout to a wider set of partners would require unusually close coordination between businesses and government agencies to prevent exploitation. The convergence of the two stories — an active breach and an industry-wide warning about exactly such risks — has intensified scrutiny of how AI companies manage access to sensitive internal systems.

The incident is part of a broader pattern of digital security concerns highlighted in this week's reporting. Wired also noted that surveillance firms have been exploiting weaknesses in global telecommunications infrastructure to track individuals, while approximately 500,000 UK health records reportedly appeared for sale on Alibaba. Apple separately issued patches addressing a vulnerability in its notification system that could expose user data.

The Anthropic breach stands out, however, given growing concern across governments and industry about the security posture of frontier AI labs, whose internal systems may hold sensitive model weights, safety evaluations, and proprietary research. A successful intrusion — even by ostensibly amateur actors coordinating via Discord — underscores that AI companies face the same threat landscape as any major technology firm, and may increasingly attract targeted attention as their systems grow in strategic value.

Anthropic had not issued a public statement at the time of publication. It remains unclear whether the company has notified relevant regulators or whether any data was extracted during the unauthorised access.

Analysis

Why This Matters

- The breach of an AI laboratory's internal system by non-state actors signals that AI companies are now high-value targets for hackers, industrial spies, and curious investigators alike — raising urgent questions about how these firms protect sensitive infrastructure.

- Simultaneous calls from industry insiders for government-business coordination suggest Mythos is not a peripheral tool but something with broader strategic significance, possibly tied to AI deployment partnerships or safety evaluations.

- If sensitive model data, alignment research, or deployment configurations were exposed, the downstream implications for AI safety and competitive secrecy could be significant.

Background

Anthropic, founded in 2021 by former OpenAI researchers including Dario and Daniela Amodei, has grown into one of the most closely watched AI safety organisations in the world. Its Claude models compete directly with OpenAI's GPT series and Google's Gemini, and the company has attracted billions in investment from Amazon and others.

The company has historically maintained a relatively low public profile on internal tooling and infrastructure. Mythos appears to be an internal system shared selectively with external partners, though its precise function has not been publicly documented. Its name had not featured prominently in public reporting before this week.

The broader cybersecurity context is significant. AI labs have increasingly been identified as targets by state-sponsored threat actors, with the US government and allies warning in recent years that foreign intelligence services are actively seeking to steal AI model weights and research. The idea that amateur investigators using consumer platforms like Discord could gain access adds a new and less predictable dimension to the threat picture.

Key Perspectives

Anthropic: The company has not publicly commented on the breach. Its stance on security has historically emphasised responsible disclosure and safety-first development, but the incident may prompt a review of access controls for partner-facing systems.

Industry cybersecurity chiefs: According to the Financial Times, executives with authorised Mythos access are urging a coordinated response involving both governments and businesses. Their position reflects concern that the tool's planned expansion increases the attack surface and that no single company can defend it alone.

Critics and security researchers: The fact that Discord-based investigators — rather than sophisticated state actors — were able to gain access raises fundamental questions about whether Anthropic applied sufficiently robust access controls to Mythos. Security analysts may argue the breach reflects an underinvestment in operational security common across the AI industry, where product development has often outpaced security infrastructure.

What to Watch

- Whether Anthropic issues a public disclosure detailing what data Mythos holds and what, if anything, was accessed or exfiltrated during the breach.

- Any regulatory response from UK, EU, or US authorities, particularly given parallel stories about health record sales and telecom exploitation surfacing in the same news cycle.

- The outcome of industry discussions about coordinated Mythos defence — specifically whether any formal government-industry framework emerges, which would be a meaningful precedent for AI infrastructure security governance.