What they did

The authors built OmniBehavior, a benchmark that integrates real-world human behavioral traces across multiple scenarios rather than a single isolated task, capturing long-horizon sequences and heterogeneous action types within a unified framework. Unlike prior benchmarks that rely on synthetic data or narrow action spaces, OmniBehavior draws entirely from authentic human behavior records, preserving the cross-scenario causal dependencies that characterize real decision-making.

Using this benchmark, they evaluated multiple state-of-the-art LLMs on their ability to simulate realistic human behavior, systematically comparing the structural properties of simulated versus authentic behavioral traces.

Key findings

- Previous benchmarks built on isolated scenarios suffer from "tunnel vision": they miss the long-term, cross-scenario causal chains that drive real-world human decisions. The authors provide empirical evidence that these dependencies affect how well behavior can be simulated.

- Current LLMs struggle to accurately simulate complex real-world behaviors, with performance plateauing even as context windows are expanded, suggesting the bottleneck is not simply context length.

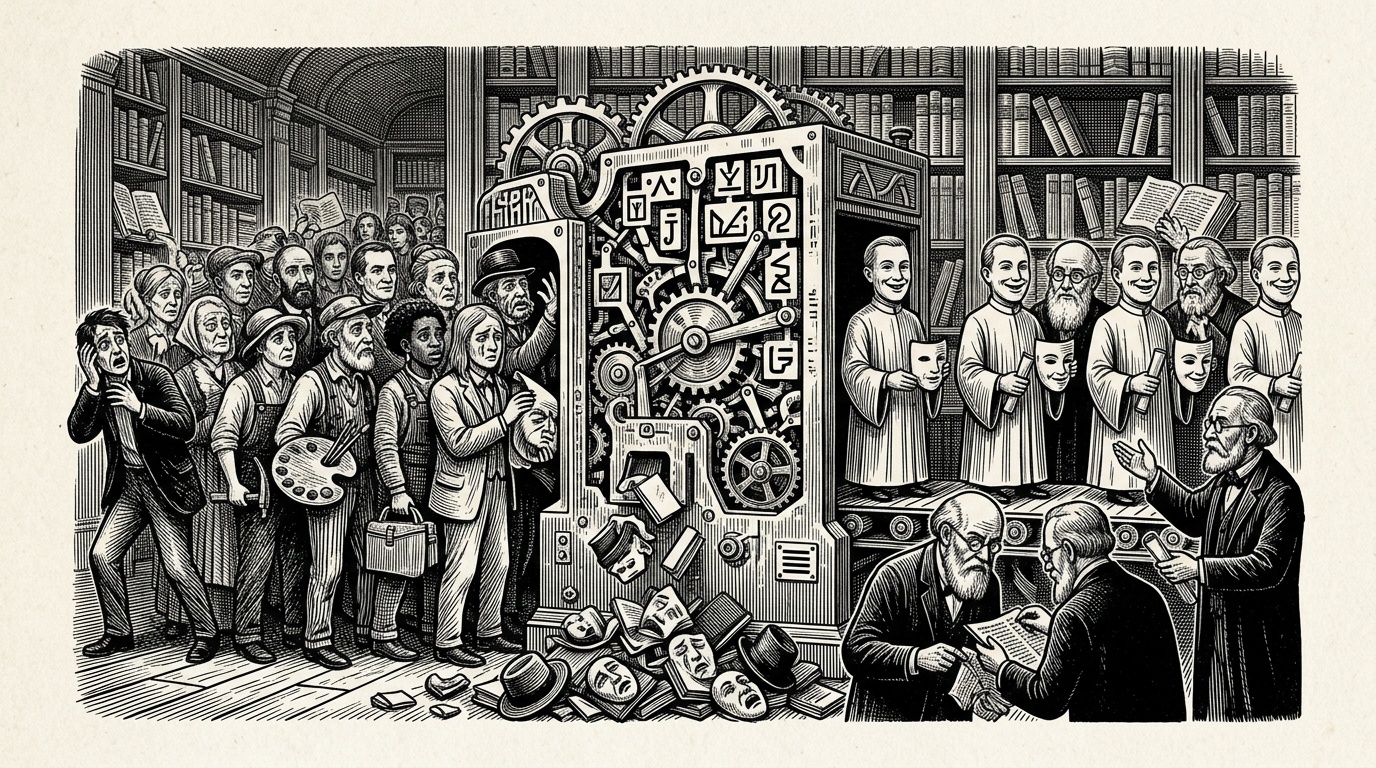

- LLMs exhibit a convergent structural bias the authors term the "positive average person" effect: simulated users are hyper-active (doing more than real people), persona-homogenized (individual differences collapse), and Utopian-biased (skewing toward positive outcomes and choices).

- Long-tail behaviors — the uncommon but authentic patterns that distinguish real individuals — are systematically lost in LLM simulations.
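
To make the three components of the "positive average person" effect and the long-tail loss more concrete, here is a minimal sketch of how one might compare simulated and real traces. It is not the authors' evaluation code: the trace format, the function names, the sentiment labels, and the rarity threshold are all hypothetical choices made for illustration.

```python
from collections import Counter
from statistics import mean, pvariance

# Hypothetical trace format (an assumption, not the benchmark's schema):
# user_id -> list of (action_type, sentiment), with sentiment in {-1, 0, +1}.
Trace = dict[str, list[tuple[str, int]]]


def activity_ratio(real: Trace, sim: Trace) -> float:
    """Mean actions per user, simulated / real. Values above 1 suggest hyper-activity."""
    real_counts = [len(actions) for actions in real.values()]
    sim_counts = [len(actions) for actions in sim.values()]
    return mean(sim_counts) / mean(real_counts)


def variance_ratio(real: Trace, sim: Trace) -> float:
    """Between-user variance of action counts, simulated / real.
    Values below 1 suggest that individual differences have collapsed."""
    real_counts = [len(actions) for actions in real.values()]
    sim_counts = [len(actions) for actions in sim.values()]
    return pvariance(sim_counts) / pvariance(real_counts)


def positive_share(trace: Trace) -> float:
    """Fraction of all actions labeled with positive sentiment."""
    sentiments = [s for actions in trace.values() for _, s in actions]
    return sum(1 for s in sentiments if s > 0) / len(sentiments)


def lost_tail_actions(real: Trace, sim: Trace, max_share: float = 0.15) -> set[str]:
    """Action types that are rare in the real data (share <= max_share,
    an arbitrary illustrative threshold) and never appear in the simulation."""
    counts = Counter(a for actions in real.values() for a, _ in actions)
    total = sum(counts.values())
    rare = {a for a, c in counts.items() if c / total <= max_share}
    simulated = {a for actions in sim.values() for a, _ in actions}
    return rare - simulated


# Toy data, invented for this example only.
real_traces: Trace = {
    "u1": [("view", 0), ("like", 1)],
    "u2": [("view", 0), ("view", 0), ("skip", -1),
           ("view", 0), ("complain", -1), ("like", 1)],
    "u3": [("view", 0)],
}
sim_traces: Trace = {
    "u1": [("view", 0), ("like", 1), ("like", 1), ("share", 1), ("view", 0)],
    "u2": [("view", 0), ("like", 1), ("like", 1), ("share", 1),
           ("like", 1), ("view", 0)],
    "u3": [("view", 0), ("like", 1), ("share", 1), ("like", 1),
           ("view", 0), ("like", 1), ("like", 1)],
}

print("activity ratio:", activity_ratio(real_traces, sim_traces))
print("variance ratio:", variance_ratio(real_traces, sim_traces))
print("positive share (real, sim):",
      positive_share(real_traces), positive_share(sim_traces))
print("lost tail actions:", sorted(lost_tail_actions(real_traces, sim_traces)))
```

On this toy data the simulated users act more often, differ less from one another, skew positive, and never produce the rare `skip` or `complain` actions. Real evaluations would need richer measures than raw action counts, but the sketch shows why aggregate accuracy alone can hide these distortions.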

Why it matters

User simulation is increasingly relied upon for recommendation systems, social science research, and product testing. If LLMs used as simulators systematically erase individual variation and skew toward optimistic, hyperactive behavior, downstream applications built on these simulations will inherit the same distortions. By identifying specific structural biases rather than reporting only aggregate accuracy, the paper gives the field concrete targets for improvement and warrants caution about deploying LLM-based simulators as stand-ins for real user studies.

Caveats

The paper establishes the existence of structural biases but does not propose solutions to correct them. The specific real-world data sources and their demographic coverage are not detailed in the abstract, so the generalizability of the benchmark across populations and cultures remains unclear. Additionally, while the authors show that performance plateaus as context windows expand, the mechanisms behind this ceiling (architectural, training-related, or data-related) are not fully disentangled.