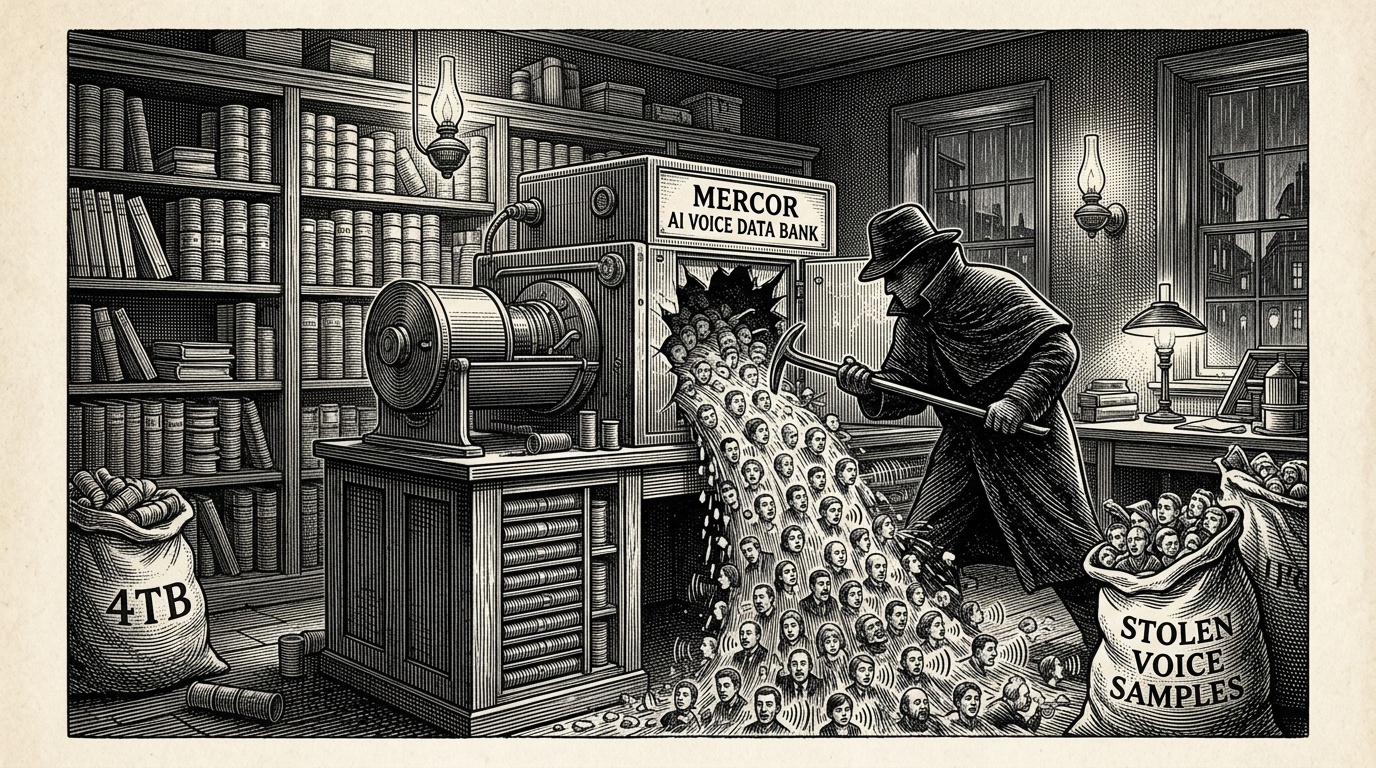

A significant data breach has struck Mercor, an AI talent platform, with attackers stealing approximately 4 terabytes of voice samples collected from roughly 40,000 contractors who were hired to generate training data for artificial intelligence systems.

A major cybersecurity incident at Mercor, a platform that connects freelance contractors with AI companies seeking training data, has resulted in the theft of 4 terabytes of voice recordings from tens of thousands of workers, according to reports emerging in late April 2026.

The breach affects approximately 40,000 individuals who had contributed voice samples as part of AI data labelling and annotation work — a rapidly growing segment of the gig economy that supplies the raw human-generated data used to train large language models and voice recognition systems.

What Was Stolen

The compromised data consists primarily of voice recordings, which are considered sensitive biometric information. Unlike passwords, biometric data cannot be changed once exposed, raising serious long-term privacy concerns for those affected. Voice samples can potentially be used to clone a person's voice, bypass voice-based authentication systems, or enable targeted social engineering attacks.

Mercor has not yet publicly detailed the full scope of the breach, how the attackers gained access, or what security measures were in place at the time of the incident. The company has not confirmed whether personally identifiable information beyond the voice recordings — such as names, addresses, or payment details — was also taken.

The AI Data Supply Chain Under Scrutiny

The incident highlights a systemic vulnerability in the AI industry's data supply chain. Platforms like Mercor sit at a critical junction between AI developers, who need vast quantities of labelled training data, and a distributed workforce of freelance contractors around the world.

These workers are often required to submit sensitive personal data, including voice recordings, facial images, and written samples, as part of their work. Yet cybersecurity protections at many of these intermediary platforms have not always kept pace with the sensitivity of the data they handle.

The AI training data industry has grown rapidly alongside the boom in generative AI, with companies racing to gather diverse, high-quality datasets. This urgency has sometimes meant that data governance and security practices have lagged behind commercial pressures.

Response and Notification

As of the time of publication, it is unclear whether affected contractors have been directly notified of the breach. Depending on where those contractors are located, the incident may trigger notification obligations or other liability under privacy laws including the EU's General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and similar laws in other jurisdictions.

Cybersecurity experts are urging those who worked with Mercor to monitor for unusual activity, particularly voice-based verification attempts made in their name and impersonation scams that could exploit the stolen audio.

Analysis

Why This Matters

- Voice data is biometric — it cannot be reset like a password, meaning the 40,000 affected contractors face a potentially permanent privacy risk that could enable voice cloning or authentication bypass for years to come.

- The breach exposes a critical and underexamined security weakness in the AI industry's data supply chain, where third-party platforms handling sensitive biometric data may lack adequate protections.

- Regulators in the EU, US, and elsewhere may use this incident to sharpen scrutiny of how AI training data platforms collect, store, and protect biometric information from gig workers.

Background

The AI boom of the early 2020s created enormous demand for human-generated training data — voice recordings, text annotations, image labels — supplied by millions of gig workers through platforms such as Scale AI, Appen, Remotasks, and newer entrants like Mercor. These companies act as intermediaries, recruiting contractors globally to perform micro-tasks that machine learning models require at scale.

While the major AI labs have faced scrutiny over their data practices, less attention has been paid to the security posture of the intermediary platforms that actually collect and store sensitive worker data. Biometric data — including voice, facial images, and fingerprints — has become increasingly common in these workflows as AI developers seek to train systems capable of recognising and replicating human characteristics.

Data breaches involving biometric information are considered particularly serious by privacy regulators. The US does not have a comprehensive federal biometric privacy law, though states like Illinois (BIPA) and Texas have enacted protections. The EU's GDPR treats biometric data used to uniquely identify a person as a special category, which generally may not be processed unless a specific condition such as explicit consent applies.

Key Perspectives

Affected Contractors: Workers who contributed voice samples face unique harms: their biometric data is now potentially in criminal hands with no ability to revoke or change it. Many may be in developing countries with limited legal recourse against a US-based platform.

AI Industry: Companies that rely on data labelling platforms will face questions about their vendor due diligence. If Mercor was collecting data on behalf of named AI clients, those clients may also face regulatory exposure depending on contractual arrangements.

Critics/Skeptics: Privacy advocates have long warned that the AI training data industry's rapid growth has outpaced its security and ethical frameworks. This breach may validate those concerns and intensify calls for mandatory security audits of platforms handling biometric data, as well as greater transparency about how contractor data is stored and for how long.

What to Watch

- Whether Mercor publishes a formal breach disclosure detailing the attack vector, data exposed, and remediation steps — and how quickly it does so relative to legal obligations.

- Regulatory responses from data protection authorities, particularly in the EU, given GDPR's requirement to notify the supervisory authority within 72 hours of becoming aware of a breach, where feasible, and its heightened rules around biometric data.

- Whether AI companies that contracted with Mercor publicly acknowledge the incident and their own potential exposure or liability.