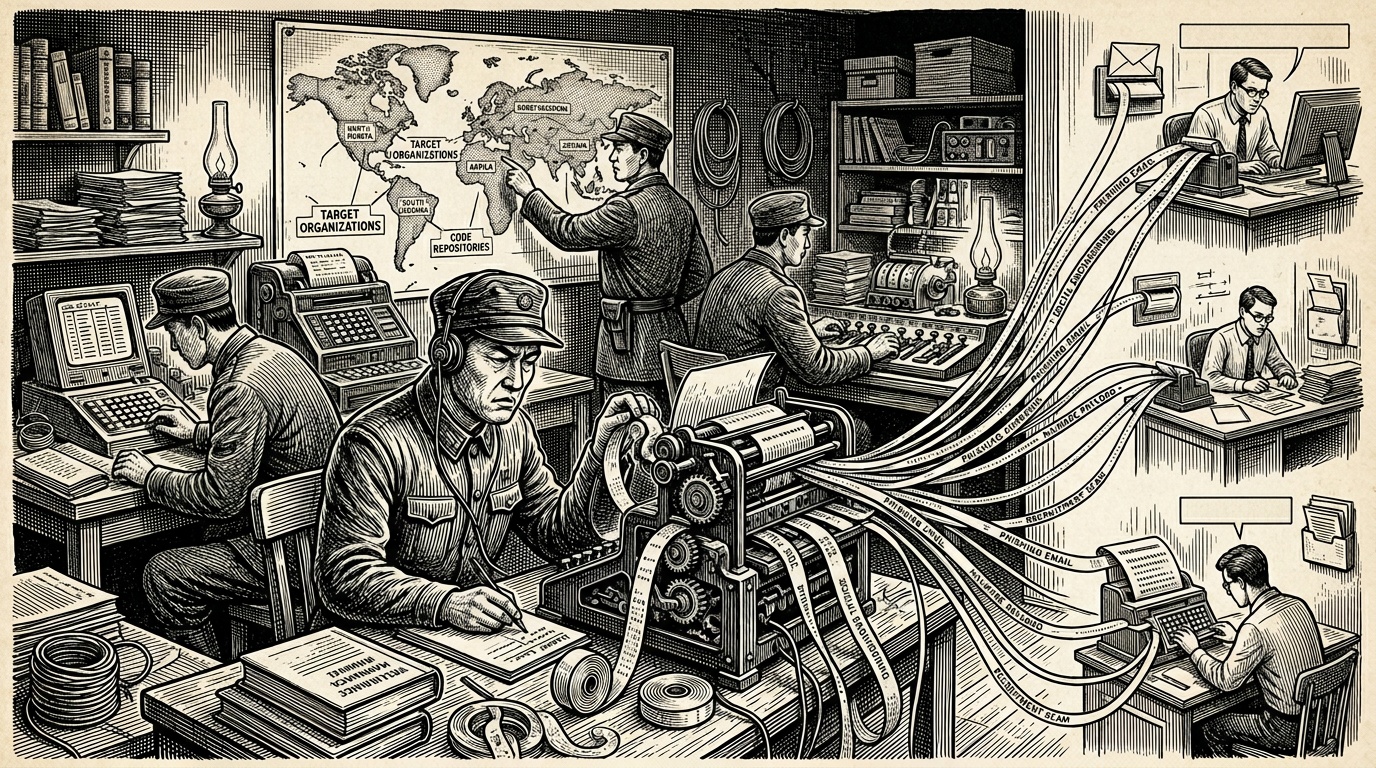

North Korea's Lazarus Group, one of the world's most prolific state-sponsored hacking organisations, has adopted artificial intelligence tools to dramatically expand the scale and sophistication of its attacks on software developers, according to research published by cybersecurity company Expel.

The report outlines how Lazarus — long linked to the North Korean government and responsible for billions of dollars in cryptocurrency theft and corporate espionage — is now using AI to automate the creation of fake personas, craft convincing phishing messages, and generate malicious code at a speed and volume previously impossible for human operators alone.

Targeting Developers Directly

Developers have become a high-value target for the group due to their privileged access to codebases, cloud infrastructure, and financial systems. Lazarus operatives reportedly pose as recruiters, open-source collaborators, or fellow engineers on platforms including LinkedIn, GitHub, and freelance job boards.

Once contact is established, targets are typically lured into downloading malicious code disguised as job interview tests, technical assessments, or software packages. AI appears to be helping the group personalise these lures at scale, which makes them harder to detect.

AI as a Force Multiplier

Expel's analysis suggests that AI is functioning as a force multiplier for Lazarus, allowing a relatively small team of operators to carry out operations that would previously have required a much larger workforce. The tools reportedly assist in generating believable cover identities, translating communications into fluent English, and rapidly adapting attack strategies in response to failed attempts.

This marks a change in how the group operates. Whereas earlier Lazarus campaigns relied on labour-intensive manual targeting, AI integration allows the group to run parallel campaigns against many victims simultaneously.

Broader Context

The Lazarus Group is believed to operate under the direction of North Korea's Reconnaissance General Bureau and has been blamed for the 2014 Sony Pictures hack, the 2016 Bangladesh Bank heist, and the 2022 Ronin Network cryptocurrency theft, which at approximately $620 million was among the largest crypto hacks on record at the time.

Cybersecurity researchers have noted a growing trend of nation-state actors adopting commercially available AI tools to enhance offensive capabilities, a development that is compressing the skill gap between sophisticated state actors and less-resourced groups.

Expel advises developers to treat unsolicited outreach from recruiters or collaborators with heightened scepticism, avoid running code from unknown sources, and adopt strong endpoint security practices. Organisations are encouraged to monitor for unusual outbound network activity that may indicate compromised developer machines.