What they did

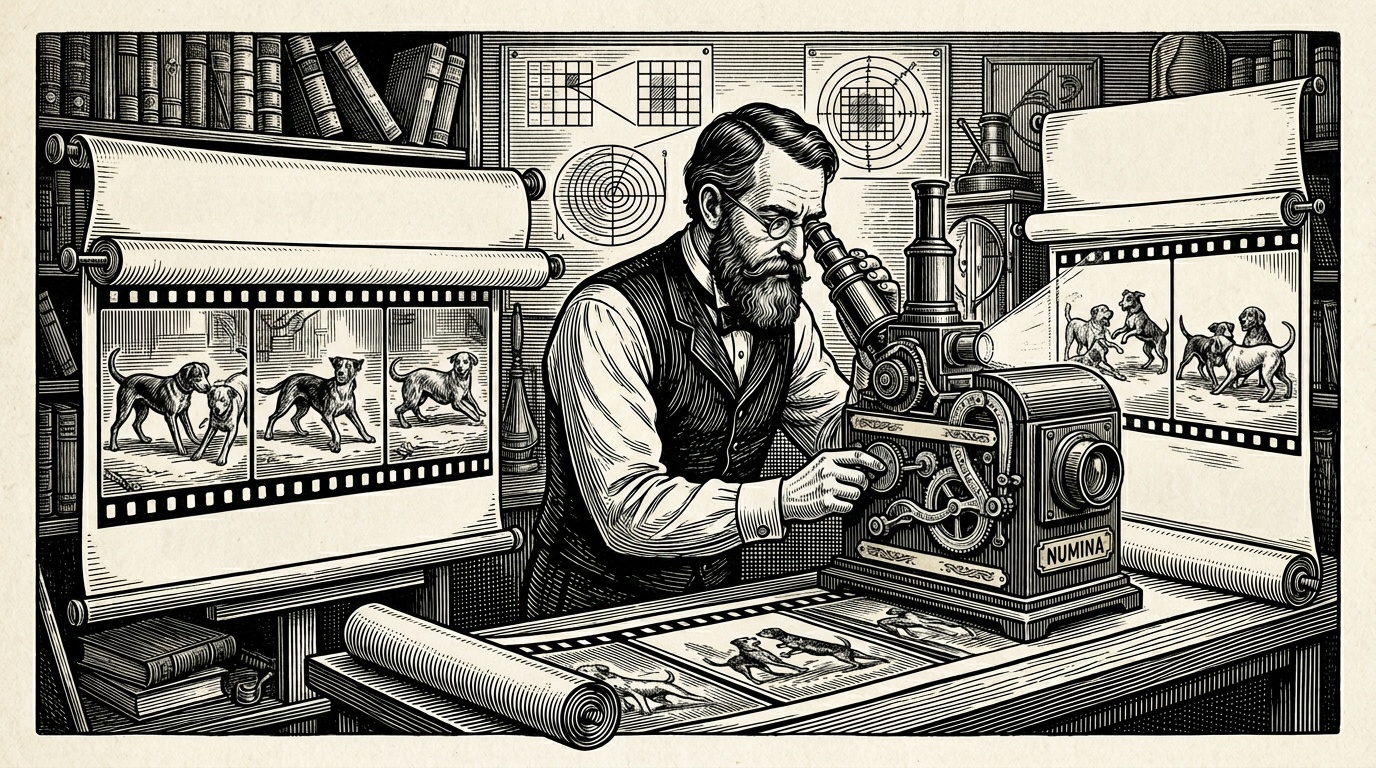

The authors developed NUMINA, a plug-in framework for existing text-to-video diffusion models that requires no parameter updates. The system works in two stages. In the first, it selects discriminative self-attention and cross-attention heads to construct a 'countable latent layout' (a spatial map from which the number of discrete object instances in the current generation can be read off) and compares this layout against the numeric specification in the prompt to detect inconsistencies.
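To make the layout-versus-prompt comparison concrete, here is a minimal sketch. It assumes the layout is a 2D grid of aggregated attention values and that instances can be counted as connected regions above a threshold. The function names, the threshold, and the connected-region rule are illustrative assumptions, not the authors' actual construction.

```python
from collections import deque


def count_instances(attn_map, threshold=0.5):
    """Count 4-connected regions whose values are >= threshold.

    Hypothetical stand-in for reading a count off a latent layout.
    """
    if not attn_map or not attn_map[0]:
        return 0
    rows, cols = len(attn_map), len(attn_map[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or attn_map[r][c] < threshold:
                continue
            # New region found: flood-fill it so it is counted once.
            count += 1
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and attn_map[ny][nx] >= threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return count


def count_mismatch(attn_map, target_count, threshold=0.5):
    """Detected count minus requested count; 0 means the layout is consistent."""
    return count_instances(attn_map, threshold) - target_count


# Toy layout with two separate high-attention blobs.
toy_map = [
    [0.9, 0.8, 0.0, 0.0, 0.0],
    [0.7, 0.9, 0.0, 0.0, 0.6],
    [0.0, 0.0, 0.0, 0.0, 0.8],
    [0.0, 0.0, 0.0, 0.0, 0.0],
]
print(count_instances(toy_map))    # 2
print(count_mismatch(toy_map, 3))  # -1: one instance short of "three"
```

A negative result means an instance is missing and a positive one means there is a surplus, which is the kind of signal a later correction step would need.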

In the second stage, when a mismatch is found, NUMINA conservatively refines the latent layout and modulates cross-attention maps to steer subsequent denoising steps toward the correct count. The authors evaluated the method on a newly introduced benchmark, CountBench, using Wan2.1 models at 1.3B, 5B, and 14B parameter scales.
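One way to picture cross-attention modulation is as a small additive bias applied to an object token's attention map inside a region where an instance should appear, followed by renormalization. The sketch below is only an illustration under that assumption. `boost_region`, its `boost` parameter, and the additive rule are hypothetical and are not taken from the paper.

```python
def boost_region(attn, region, boost=0.5):
    """Add `boost` to attention weights at the (row, col) cells in `region`,
    then rescale so the map keeps its original total mass.

    Hypothetical illustration of steering attention toward a target region.
    """
    total = sum(sum(row) for row in attn)
    boosted = [
        [w + boost if (r, c) in region else w for c, w in enumerate(row)]
        for r, row in enumerate(attn)
    ]
    new_total = sum(sum(row) for row in boosted)
    if new_total == 0:
        return boosted
    scale = total / new_total
    return [[w * scale for w in row] for row in boosted]


# Attention for one object token on a 2x2 grid (sums to 1.0).
attn = [
    [0.4, 0.1],
    [0.1, 0.4],
]
# Encourage an instance at the top-right cell.
steered = boost_region(attn, region={(0, 1)}, boost=0.5)
```

A small `boost` keeps the change modest, in the spirit of the conservative refinement described above. Cells outside the region lose some weight only through the renormalization.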

Key findings

- Counting accuracy improved by 7.4% on Wan2.1-1.3B, 4.9% on the 5B model, and 5.5% on the 14B model relative to unmodified baselines.

- CLIP alignment scores — measuring overall prompt–video semantic correspondence — improved alongside counting accuracy, suggesting the intervention does not sacrifice general prompt fidelity.

- Temporal consistency was maintained, meaning the corrections did not introduce visible flickering or frame-to-frame incoherence.

- The authors find that structural layout guidance is complementary to, not a replacement for, seed search and prompt engineering strategies.
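The summary does not say how the CLIP alignment and temporal consistency metrics were computed. A common formulation, assumed here, averages the cosine similarity between a text embedding and each frame embedding for alignment, and averages the cosine similarity between adjacent frame embeddings for consistency. The sketch takes embeddings as plain lists of floats and leaves out the embedding model itself. All function names are illustrative.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def clip_alignment(text_emb, frame_embs):
    """Mean text-to-frame cosine similarity (assumed metric definition)."""
    if not frame_embs:
        raise ValueError("need at least one frame embedding")
    return sum(cosine(text_emb, f) for f in frame_embs) / len(frame_embs)


def temporal_consistency(frame_embs):
    """Mean cosine similarity between consecutive frames (assumed definition)."""
    if len(frame_embs) < 2:
        raise ValueError("need at least two frame embeddings")
    pairs = list(zip(frame_embs, frame_embs[1:]))
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


# Toy 2-D embeddings: the last frame drifts away from the prompt.
text = [1.0, 0.0]
frames = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
alignment = clip_alignment(text, frames)
consistency = temporal_consistency(frames)
```

Under these definitions, an intervention that fixes the count but causes flicker would show up as a drop in `consistency` even if `alignment` holds steady. That is why the two are reported separately.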

Why it matters

Numerical alignment is a persistent and underexplored failure mode in generative video models. A training-free approach is practically significant because it can be applied to commercial or large-scale models whose weights are not freely modifiable. The attention-head selection methodology also offers a potentially transferable technique for diagnosing other structural failures in diffusion generation.

Caveats

All experiments were conducted on a single model family (Wan2.1), so generalizability to architecturally distinct video diffusion models is untested. CountBench was introduced by the same authors, which raises questions about the independence of the benchmark design. The evaluation focuses on relatively small object counts; performance on larger or more complex count targets is not characterized. The conservative layout refinement strategy may limit improvements in cases where the initial layout is severely incorrect.